Should You Even Trust Gemini’s Million-Token Context Window?

Imagine you’re tasked with analyzing your company’s entire database (millions of customer interactions, years of financial data, and countless product reviews) to extract meaningful insights. You turn to AI for help. You load all of the data into Google Gemini 1.5, with its new 1-million-token context window, and start making requests, which it seems to handle. But a nagging question persists: can you trust the AI to accurately process and understand all of this information? How confident can you be in its analysis when it’s dealing with such a vast amount of data? Are you going to have to dig through a million tokens’ worth of data to validate each answer?

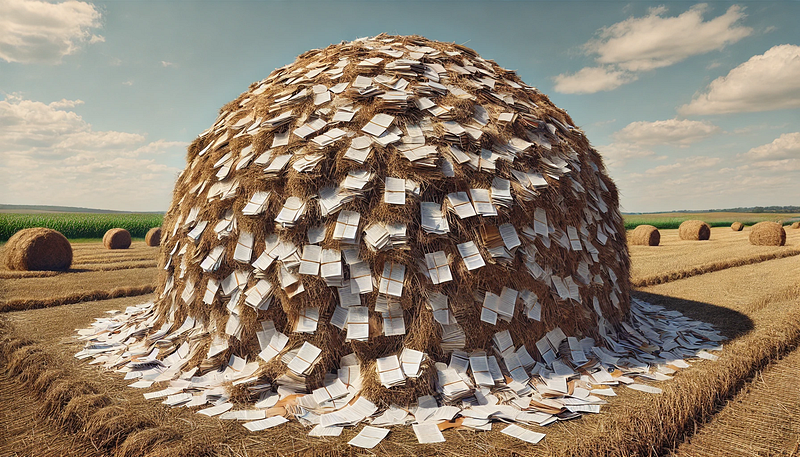

Traditional evaluations, like the well-known “needle-in-a-haystack” test, fall short of truly assessing an AI’s ability to reason across large, cohesive bodies of information. These tests typically hide unrelated information (needles) in an otherwise homogeneous context (haystack). The problem is that this shifts the focus to information retrieval and anomaly detection rather than comprehensive understanding and synthesis. Our goal wasn’t just to see whether Gemini could find a needle in a haystack, but to evaluate whether it could understand the entire haystack itself.

Using a real-world dataset of App Store information, we systematically tested Gemini 1.5 Flash across increasing context lengths. We asked it to compare app prices, recall specific privacy policy details, and evaluate app ratings — tasks that required both information retrieval and reasoning capabilities. For our evaluation platform, we used LangSmith by LangChain, which proved to be an invaluable tool in this experiment.
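To make the setup concrete, here is a minimal sketch of the kind of loop described above: grow the context step by step, ask a price-comparison question whose answer can be computed from the data, and check the model's reply. Everything in it is an assumption for illustration. The app records are made up, `ask_model` is a hypothetical stand-in for a real model call (it is not the Gemini or LangSmith API), and the "4 characters per token" budget is only a rough rule of thumb.

```python
from typing import Callable

# Hypothetical records standing in for rows of the App Store dataset.
APPS = [
    {"name": "AlphaNotes", "price": 4.99, "rating": 4.5},
    {"name": "BetaFit", "price": 0.00, "rating": 3.9},
    {"name": "GammaMaps", "price": 2.99, "rating": 4.8},
    {"name": "DeltaChess", "price": 9.99, "rating": 4.1},
]


def render(app: dict) -> str:
    """Turn one record into a line of context text."""
    return f"App: {app['name']} | Price: ${app['price']:.2f} | Rating: {app['rating']}"


def build_context(apps: list[dict], max_tokens: int) -> tuple[str, list[dict]]:
    """Add records until a rough token budget is hit (assumes ~4 chars per token)."""
    lines, used = [], 0
    for app in apps:
        line = render(app)
        cost = len(line) // 4 + 1
        if used + cost > max_tokens:
            break
        lines.append(line)
        used += cost
    return "\n".join(lines), apps[: len(lines)]


def make_price_question(included: list[dict]) -> tuple[str, str]:
    """Compare the first and last app in the context; ground truth comes from the data."""
    a, b = included[0], included[-1]
    expected = a["name"] if a["price"] > b["price"] else b["name"]
    question = f"Which app costs more: {a['name']} or {b['name']}? Answer with the name only."
    return question, expected


def evaluate(ask_model: Callable[[str], str], budgets: list[int]) -> dict[int, bool]:
    """Run the same task at each context budget and record whether the answer was right."""
    results = {}
    for budget in budgets:
        context, included = build_context(APPS, budget)
        if len(included) < 2:
            continue  # not enough records to compare at this budget
        question, expected = make_price_question(included)
        answer = ask_model(f"{context}\n\n{question}")
        results[budget] = expected.lower() in answer.lower()
    return results
```

In a real run, `ask_model` would wrap a call to the model under test, and the per-budget results would be logged to whatever evaluation platform you use. Comparing the first and last records is a deliberate choice here: it forces the model to use information from both ends of the context rather than one local region.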

The results were nothing short of amazing! Let’s dive in.