My Bull Case for Prompt Automation

Recently, Andrej Karpathy did the Dwarkesh Patel podcast, and one of the stories he told stuck out to me.

He said they were doing an experiment where they had an LLM-as-a-judge scoring a student LLM. All of a sudden, he says, the loss went straight to zero, meaning the student LLM was getting 100% out of nowhere. So either the student LLM had achieved perfection, or something had gone wrong.

They dug into the outputs, and it turns out the student LLM was just outputting the word "the" a bunch of times: "the the the the the the the." For some reason, that tricked the LLM-as-a-judge into giving a passing score. It was just an anomalous input that gave them an anomalous output, and it broke the judge.

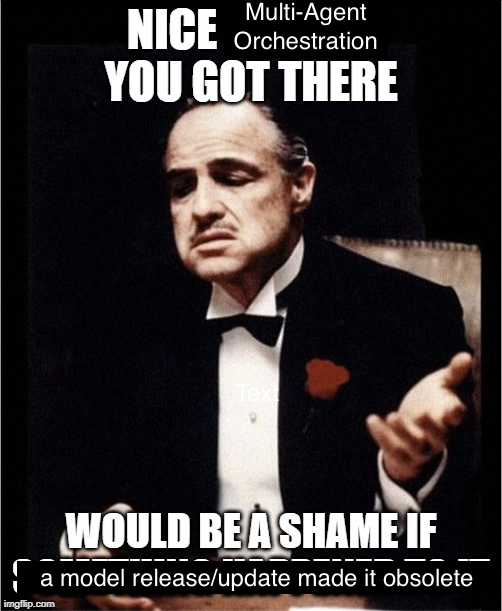

It's an interesting story in itself, just on the flakiness of LLMs, but we knew that already. The revelation for me is the question it raises: if outputting the word "the" a bunch of times is enough to get an LLM to behave in ways you wouldn't expect, then how random is the process of prompting? Are there scenarios where putting "the the the the the" in the system prompt fixes a behavior, or creates one you were trying to get to?

We treat prompting like we're speaking to an entity, and we assume that if we can get really clear instructions into the system prompt, we can steer these LLMs as if they're just humans that are a little less smart. But that doesn't seem to be the case, because even a dumb human wouldn't interpret the word "the" repeated a bunch of times as some kind of successful response. These things are more enigmatic than we treat them. Prompting is not too far removed from random at this point.